8 bits for a Byte: The organizations winning the agentic AI transition are not the ones with the most models. They are the ones that figured out, faster than their competitors, that deploying autonomous systems is a governance problem masquerading as an engineering problem. The Agentic Manifesto is the clearest articulation I have seen of why that distinction matters – and what to do about it.

This is not a technology brief. It is an operating model decision.

Let’s Get To It!

Welcome To AI Quick Bytes!

Bit 1: The Governance Gap Is Your Actual Risk Surface

The old bargain of software engineering – specify the logic, control the outcome – was a risk management framework as much as a technical methodology. It gave leaders a credible answer to "how do we know it will behave correctly?" The answer was: because we wrote the spec, and the code is deterministic.

Agentic systems invalidate that answer. Behavior now emerges from models, context, tools, prompts, retrieval pipelines, and runtime conditions. The strategic response is not to patch the old framework. It is to replace the unit of control – from authored logic to bounded behavior. Engineering discipline does not disappear. It upgrades. Your governance obligation expands.

Leaders who treat this as a technical nuance will be explaining behavioral incidents to boards that were never briefed on the architecture change that made them possible.

The risk surface is no longer what code does; it is what the system interprets and decides.

Legacy assurance frameworks create false confidence in agentic contexts.

Bounded behavior governance is a leadership obligation, not an engineering option.

Strategic Mandate: Convene your risk, engineering, and product leadership in one room and answer this question: "What is our current framework for governing emergent AI behavior?" If the answer takes longer than two sentences, you have structural work to do.

Bit 2: Quote of the Week

The deterministic era gave you a machine. The agentic era gives you a society.

Bit 3: Your Delivery Architecture Was Not Built for This Asset Class

The SDLC is one of the most successful institutional frameworks in the history of technology. It was designed to deliver deterministic artifacts – things that behave identically every time, whose quality can be verified before release. That is not what your agentic systems are. They are adaptive runtime entities that respond to context, memory, and tool access in ways that cannot be fully specified upfront.

Treating agents like software products does not reduce complexity – it hides it. The manifesto pairs this architectural argument with a performance measurement argument: DORA-style throughput metrics remain relevant, but they are no longer sufficient. A team can execute flawlessly on velocity and still ship behavioral drift, eroded trust, and undetected governance risk. The delivery scorecard and the governance scorecard are different instruments. Most organizations are running only one of them.

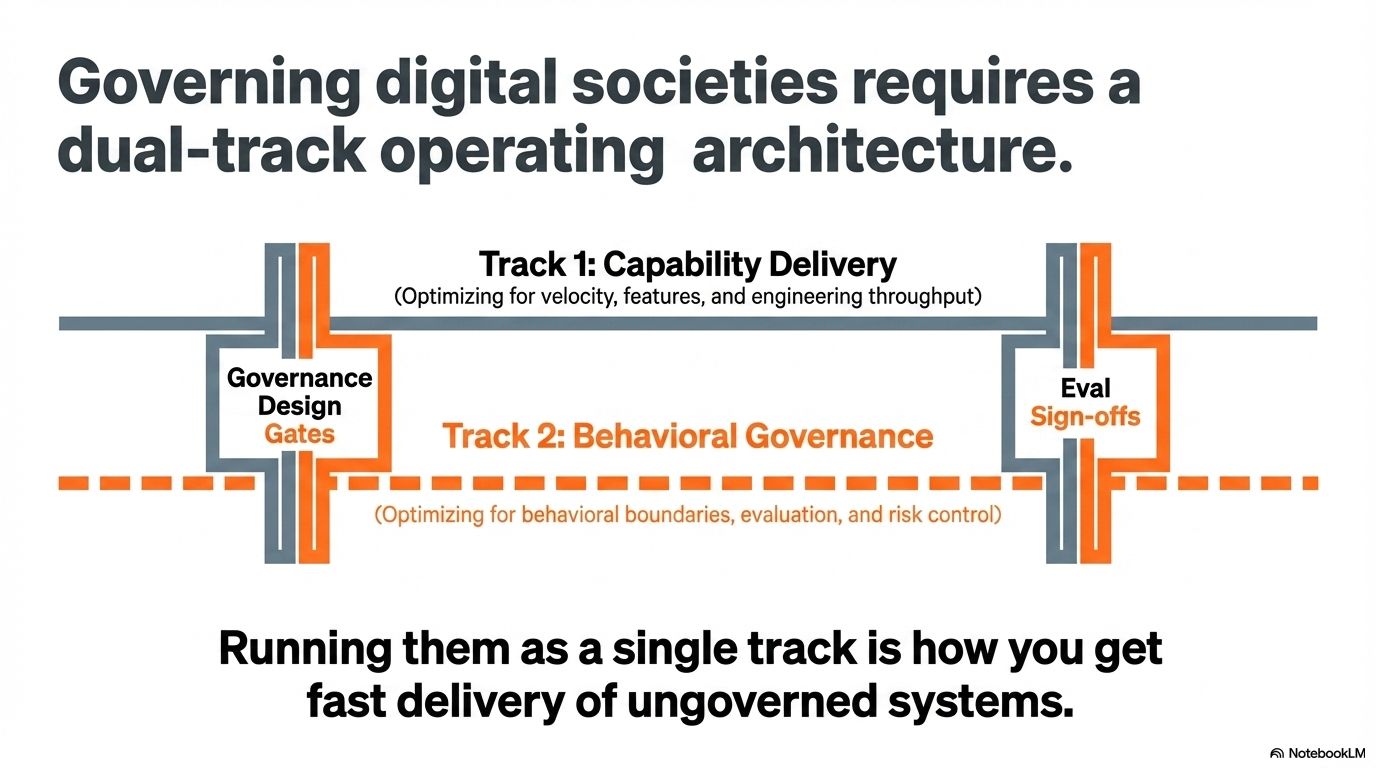

The strategic implication is straightforward: your operating model needs two distinct tracks – one optimizing delivery, one governing behavior. Running them as one track is how you get fast delivery of ungoverned systems.

SDLC excellence and behavioral governance excellence are orthogonal capabilities.

A single delivery scorecard creates a blind spot the size of your AI risk surface.

The operating model requires a second track explicitly designed for adaptive entities.

Strategic Mandate: Add a "behavioral governance" column to your AI program review dashboard before the next board presentation. If your team cannot populate it, that is the most important finding in the review.

Bit 4: Silent Failures Are a Board-Level Risk

Operational risk in agentic systems does not typically arrive as a loud failure. It arrives as behavioral drift – quiet, accumulating, and consequential long before it surfaces in any dashboard. The manifesto calls this the "Determinism Gap," and its four failure modes are precisely the kind of risk that board members ask about after the incident, not before.

Binary pass/fail testing misses judgment errors – answers that are factually correct and contextually wrong. Regression becomes invisible when prompt or retrieval changes shift behavior without triggering alerts. Accountability dissolves when "the model hallucinated" becomes an acceptable incident summary. And complexity compounds in multi-agent pipelines where emergent goal conflicts arrive without warning. None of these failures announce themselves. That is what makes them strategically significant. The organizations that have instrumented for these failure modes hold a durable advantage over the ones that discover them through incidents.

Judgment failures and behavioral drift are not detectable by standard QA.

"The model hallucinated" is an accountability gap, not an incident explanation.

Instrumentation for silent failures is a governance differentiator.

Strategic Mandate: Require your AI risk owner to produce a "silent failure inventory" for every production agent – the failure modes that would not appear on a standard monitoring dashboard but could materially affect business outcomes or regulatory posture.
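To make the "silent failure" idea concrete, here is a minimal Python sketch of how a team might catch behavioral drift that a binary pass/fail gate misses. Everything in it is an assumption for illustration: the scenario names, the 0.0 to 1.0 judgment scores, the `pass_mark` and `tolerance` values, and the `drift_report` function itself are hypothetical and do not come from the manifesto.

```python
from statistics import mean

def drift_report(baseline, candidate, pass_mark=0.7, tolerance=0.05):
    """Compare per-scenario judgment scores (0.0 to 1.0) for two agent versions.

    Hypothetical helper: flags drift when every scenario still clears the
    binary pass mark but at least one score dropped by more than `tolerance`.
    """
    shared = sorted(set(baseline) & set(candidate))
    if not shared:
        raise ValueError("no shared scenarios to compare")
    all_pass = all(candidate[s] >= pass_mark for s in shared)
    delta = mean(candidate[s] for s in shared) - mean(baseline[s] for s in shared)
    regressed = [s for s in shared if baseline[s] - candidate[s] > tolerance]
    return {
        "binary_gate_passes": all_pass,
        "mean_delta": round(delta, 3),
        "regressed_scenarios": regressed,
        # The silent case: the dashboard is green, yet behavior moved.
        "silent_drift": all_pass and bool(regressed),
    }

# Illustrative scores before and after a prompt or retrieval change.
baseline = {"refund_policy": 0.95, "tone_with_vip": 0.92, "escalation": 0.90}
candidate = {"refund_policy": 0.94, "tone_with_vip": 0.78, "escalation": 0.89}
report = drift_report(baseline, candidate)
```

In this made-up example every scenario still clears the pass mark, so a standard QA gate would stay green, while the report singles out `tone_with_vip` as a regression worth a human look.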

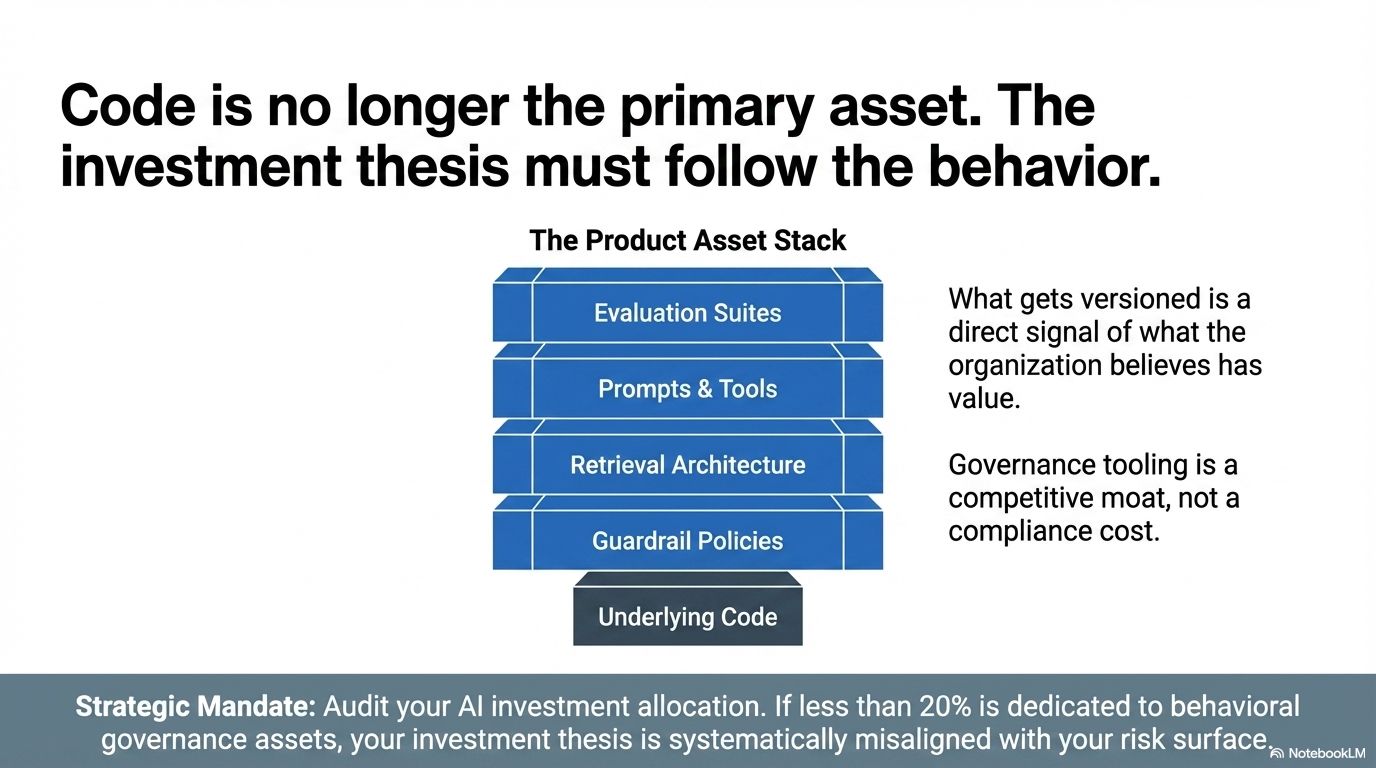

Bit 5: The Product Has Changed. The Investment Thesis Must Follow.

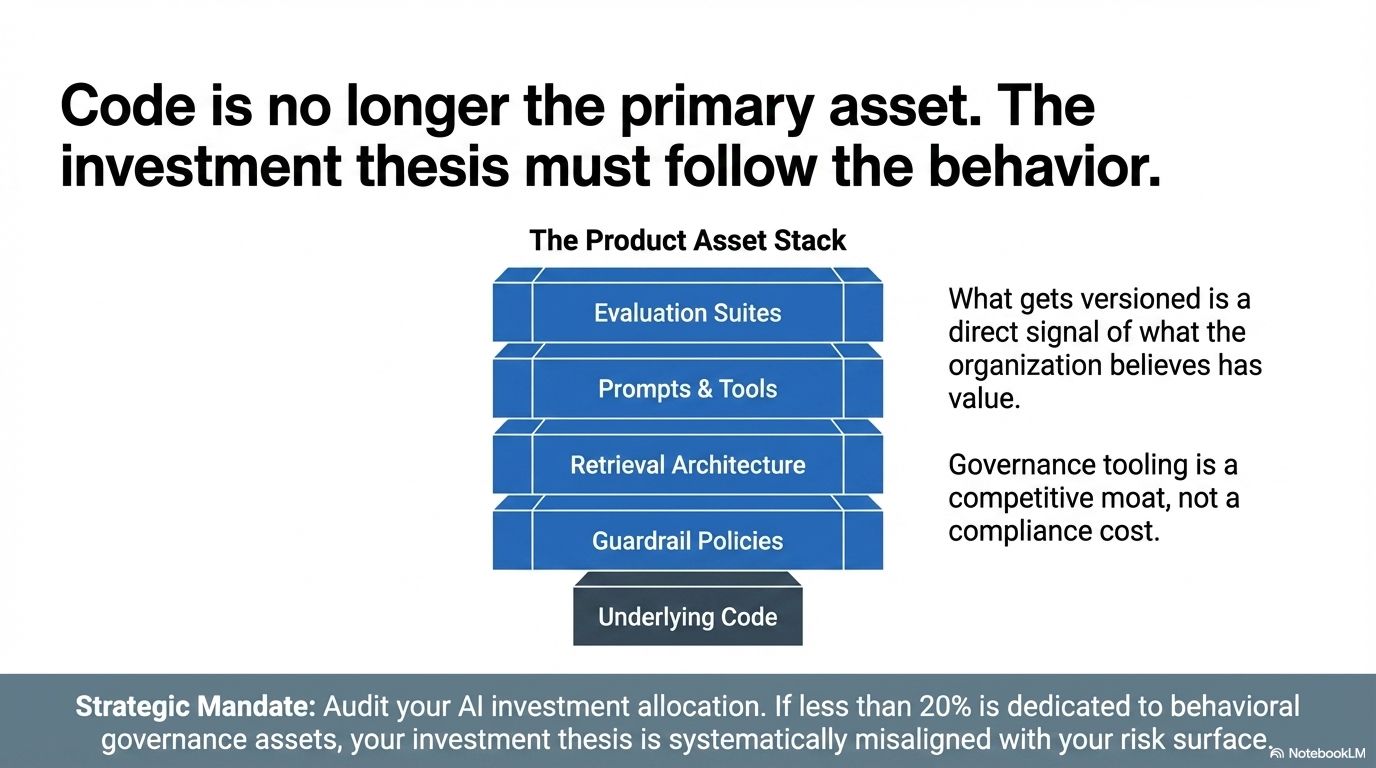

When what you ship is behavior – not just code – the assets that define product quality change. The manifesto makes this structural argument clearly: the agentic lifecycle centers prompts, tools, retrieval design, policies, and guardrails as first-class versioned assets. These are not supporting infrastructure. They are the product. What gets versioned is a direct signal of what the organization believes has value.

Most organizations are still funding their AI programs as though code is the primary asset. The roadmap is a code delivery roadmap. The versioning system tracks code. The quality gates measure code behavior. This investment architecture is systematically misaligned with the nature of the asset being built. Leaders who realign investment – toward eval suites, guardrail design, prompt engineering, retrieval architecture, and governance tooling – are not adding overhead. They are funding the actual product. The ones who recognize this before their competitors will have a governance moat that is genuinely difficult to replicate.

Prompts, policies, and retrieval pipelines are product assets requiring dedicated investment.

A code-centric investment thesis undervalues the actual sources of behavioral quality.

Governance tooling is a competitive moat, not a compliance cost.

Strategic Mandate: Audit your current AI investment allocation. What percentage is going toward behavioral governance assets – eval suites, guardrail design, monitoring infrastructure, governance tooling? If it is less than 20%, your investment thesis is misaligned with your actual risk and value surface.
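One way to picture prompts, tools, retrieval settings, and guardrails as first-class versioned assets is to fingerprint them together, so that any behavior-shaping change produces a new release identifier even when no code changes. The sketch below is an assumption-laden illustration: the manifest fields, the example values, and the `release_fingerprint` helper are hypothetical and are not taken from the manifesto.

```python
import hashlib
import json

def release_fingerprint(assets):
    """Return a short, stable hash over every behavior-shaping asset in a release.

    Hypothetical helper: keys are sorted so that field order does not
    change the fingerprint, only content does.
    """
    canonical = json.dumps(assets, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]

# Illustrative manifest; every name and value here is made up.
release_a = {
    "prompt": "You are a billing assistant. Never quote prices.",
    "tools": ["lookup_invoice", "open_ticket"],
    "retrieval": {"index": "billing-docs", "top_k": 5},
    "guardrails": ["no_price_quotes", "escalate_refunds_over_limit"],
}
# Same code, same prompt, but a retrieval setting changed.
release_b = {**release_a, "retrieval": {"index": "billing-docs", "top_k": 8}}

changed = release_fingerprint(release_a) != release_fingerprint(release_b)
```

The design choice is that a retrieval tweak counts as a release in its own right, which gives monitoring and evaluation results something specific to be attributed to.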

Bit 6: Sunday Funnies

Bit 7: Governance Architecture Is a Design-Phase Decision

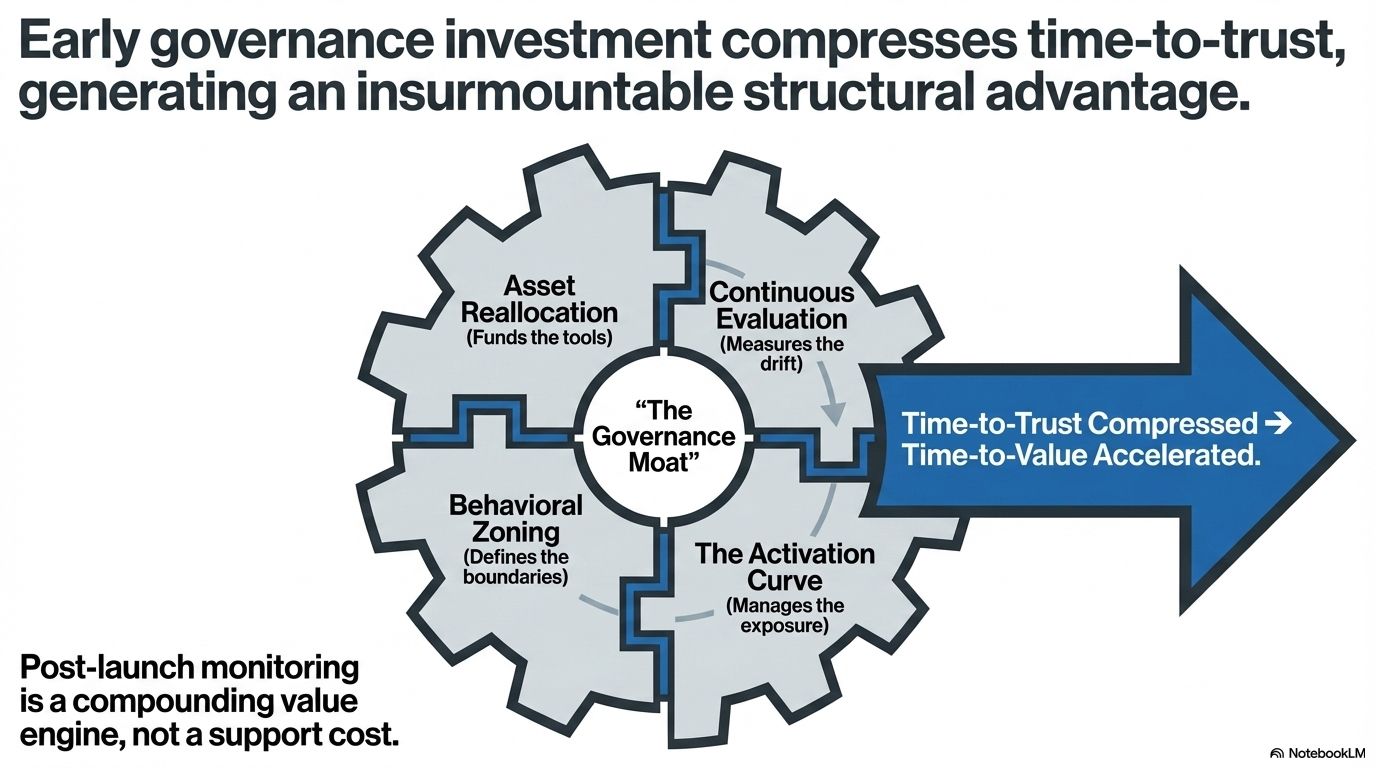

The most expensive governance decisions are the ones made after deployment. The manifesto makes the design-phase argument explicitly: governance starts before the first prompt is written. Guardrails are not a compliance wrapper applied at the end of the development cycle. They are an architectural input at the beginning of it.

The practical shift is from functional specification to behavioral zoning – defining the territory the agent can operate in, the behaviors it must never exhibit, and the escalation paths that return control to humans when decisions exceed the system's authorized scope. This is the governance logic of regulated industries, applied to AI system design. Organizations that adopt it early build agents that can be trusted faster. Organizations that discover the need for it after an incident pay for the redesign plus the incident.

Governance architecture – boundaries, prohibitions, escalation paths – must precede capability design.

Human-in-the-loop interception is a design artifact, not an incident response.

Early governance investment compresses time-to-trust, which compresses time-to-value.

Strategic Mandate: Institute a "governance-first design gate" for every new agent workflow. No feature design begins until the team has documented: the agent's authorized scope, three explicit prohibitions, and the human escalation path for out-of-scope decisions.
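The three items in that design gate (authorized scope, explicit prohibitions, escalation path) map naturally onto a small data structure. What follows is a minimal Python sketch under stated assumptions: the `BehavioralZone` class, the `Decision` enum, the action names, and the `billing-oncall` contact are all hypothetical, chosen only to show the shape of behavioral zoning.

```python
from dataclasses import dataclass
from enum import Enum

class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ESCALATE = "escalate"

@dataclass(frozen=True)
class BehavioralZone:
    """Hypothetical zone definition written before any feature design begins."""
    authorized_actions: frozenset
    prohibited_actions: frozenset
    escalation_contact: str

    def check(self, action: str) -> Decision:
        # Prohibitions are checked first so they win over any overlap.
        if action in self.prohibited_actions:
            return Decision.DENY
        if action in self.authorized_actions:
            return Decision.ALLOW
        # Anything outside the documented scope goes back to a human.
        return Decision.ESCALATE

# Illustrative zone for a made-up billing agent.
zone = BehavioralZone(
    authorized_actions=frozenset({"read_invoice", "draft_reply"}),
    prohibited_actions=frozenset({"issue_refund", "delete_record", "change_price"}),
    escalation_contact="billing-oncall",
)
```

With this zone, `zone.check("read_invoice")` returns `Decision.ALLOW`, `zone.check("issue_refund")` returns `Decision.DENY`, and an unlisted action such as `"send_contract"` returns `Decision.ESCALATE`. Defaulting to escalation rather than denial or approval is one possible choice; the point is that the default is decided at design time.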

Bit 8: Evaluation Infrastructure Is a Strategic Asset

In every mature industry, the organizations with the best measurement infrastructure win. They detect problems earlier, improve faster, and make better resource allocation decisions. Behavioral evaluation infrastructure in agentic AI is the measurement advantage of this decade.

The manifesto argues for investment in continuous evaluation: adversarial prompts, red-team exercises, edge-case coverage, semantic intent scoring, qualitative assessment. The organizations that build this infrastructure early acquire compound advantages: they detect behavioral drift before it becomes visible, they can compare tuning changes with confidence, and they create shared truth across product, engineering, risk, and executive functions. The strategic argument is also a talent argument – engineers who know how to design rigorous evaluation suites are among the highest-leverage hires in the agentic talent market, and they are not staying long in organizations that treat evaluation as a compliance afterthought.

Behavioral evaluation infrastructure compounds advantage the way data infrastructure did in the last decade.

Shared evaluation truth creates organizational alignment that informal review processes cannot.

Evaluation capability is a talent attractor for the engineers who build reliable agentic systems.

Strategic Mandate: Assign ownership of evaluation infrastructure at the product leadership level – not the QA team. Require a minimum eval coverage threshold (I recommend starting at 20 high-stakes scenarios per agent) before any production rollout receives executive sign-off.
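A coverage threshold like this is easy to turn into an automated sign-off check. The Python sketch below is illustrative only: the scenario record fields (`high_stakes`, `passed`) and the `ready_for_signoff` function are assumptions, and counting only passing scenarios toward coverage is a stricter reading than the mandate itself states. The default of 20 is the starting point suggested above.

```python
def ready_for_signoff(scenarios, min_high_stakes=20):
    """Return (ready, covered) for one agent's eval suite.

    Hypothetical gate: only high-stakes scenarios that currently pass
    count toward the coverage threshold.
    """
    covered = sum(1 for s in scenarios if s["high_stakes"] and s["passed"])
    return covered >= min_high_stakes, covered

# Illustrative suite: 30 scenarios, every second one marked high-stakes.
suite = [
    {"name": f"scenario_{i}", "high_stakes": i % 2 == 0, "passed": True}
    for i in range(30)
]
ready, covered = ready_for_signoff(suite)
```

Here the suite looks large at 30 scenarios, but only 15 are high-stakes, so `ready` is `False`. That gap between suite size and high-stakes coverage is the kind of finding the mandate is meant to surface.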

Until next time, take it one bit at a time!

Rob

P.S. Thanks for making it to the end, because this is where the future reveals itself.

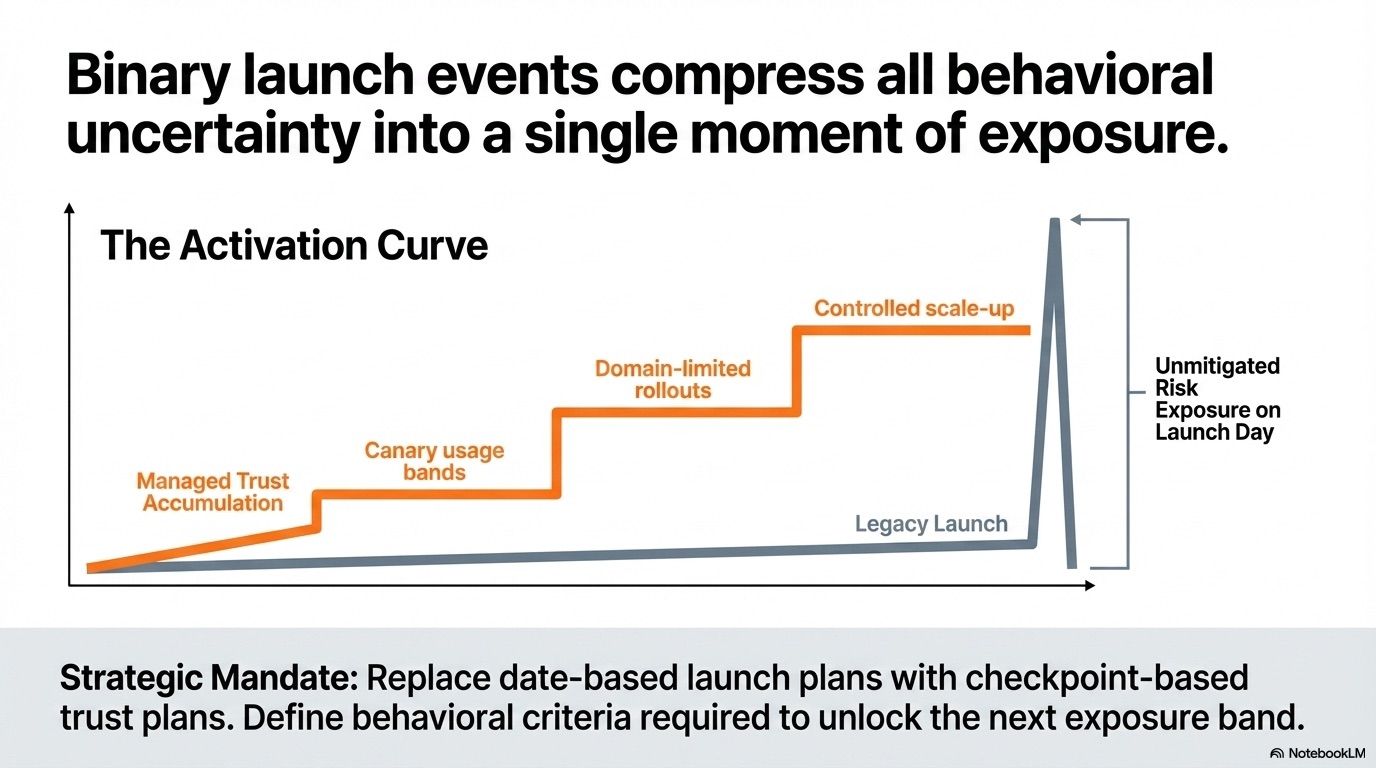

The Deployment Model Is Now a Risk Management Decision

Binary launch events – a date, a release, a go-live – are risk concentration events. They compress all behavioral uncertainty into a single moment of exposure. In agentic systems, where behavior is emergent and trust must be earned through observation, that risk concentration is unnecessary and avoidable.

The manifesto replaces the binary launch with an activation curve – phased deployment, behavioral checkpoints, canary usage bands, domain-limited rollouts, and controlled scale-up. This is not conservatism. It is accurate risk management for a non-deterministic asset class. The monitoring and tuning that follow are the mechanism by which the system's value compounds – every observation, context refinement, and behavioral adjustment improving performance over time. The organizations that redesign their rollout models around trust accumulation will find that their agentic systems improve faster and fail less visibly than those of competitors who deploy everything in a single release.

Phased deployment converts binary risk events into managed trust curves.

Checkpoint-based rollouts create natural intervention points before exposure scales.

Post-launch monitoring is a compounding value engine, not a support cost.

Strategic Mandate: Replace date-based launch plans for every material AI deployment with checkpoint-based trust plans. Define the behavioral criteria that must be met at each checkpoint before the next exposure band opens.
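A checkpoint-based trust plan can be written down as data plus one small function. The sketch below is a hedged illustration, not the manifesto's method: the `Checkpoint` fields, the three stage names, every threshold, and the 1% `floor` are invented values that a real team would replace with its own behavioral criteria.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Checkpoint:
    name: str
    traffic_share: float        # exposure band that opens once criteria are met
    min_eval_score: float       # behavioral criterion: eval suite score
    max_escalation_rate: float  # behavioral criterion: share of runs handed to humans

# Hypothetical plan; all numbers are placeholders.
PLAN = [
    Checkpoint("canary", 0.05, 0.85, 0.10),
    Checkpoint("single_domain", 0.25, 0.90, 0.08),
    Checkpoint("general", 1.00, 0.92, 0.05),
]

def allowed_exposure(plan, eval_score, escalation_rate, floor=0.01):
    """Walk checkpoints in order and stop at the first whose criteria are unmet."""
    exposure = floor
    for cp in plan:
        if eval_score < cp.min_eval_score or escalation_rate > cp.max_escalation_rate:
            break
        exposure = cp.traffic_share
    return exposure

current = allowed_exposure(PLAN, eval_score=0.91, escalation_rate=0.07)
```

With these placeholder numbers the agent clears the canary and single-domain checkpoints but not the general one, so `current` is `0.25`. Exposure is driven by observed behavior rather than by a calendar date, which is the substance of the mandate.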

Double Bonus This Week (I had too much to share)

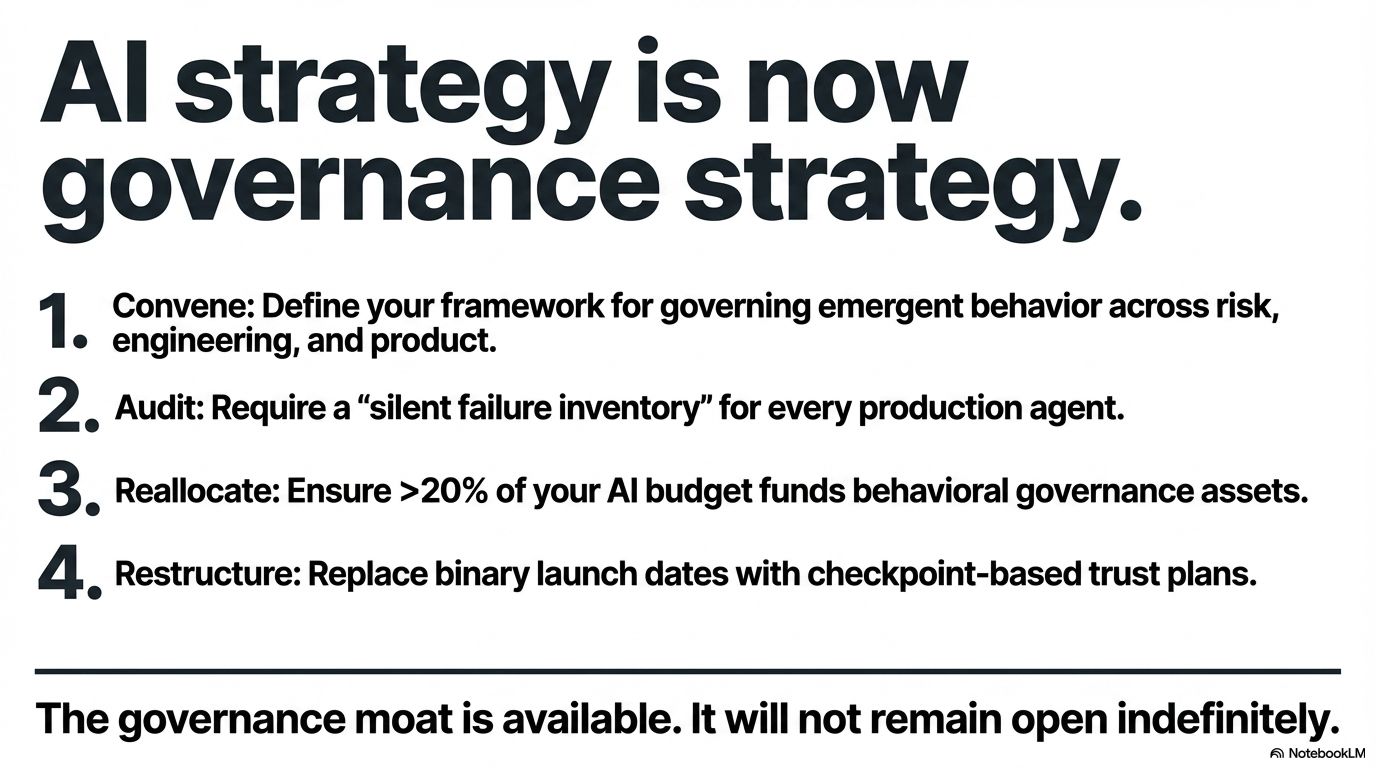

Governance Leadership Is the Emerging Structural Advantage

The final and most significant argument in the manifesto is organizational: engineering leaders in the agentic era are not building software. They are governing digital societies. The distinction is not rhetorical. It carries specific structural implications that separate the organizations building durable competitive positions from the ones still treating AI as a technology initiative.

Governing a digital society requires defining acceptable behavior at system design, not after the fact. It requires instrumenting accountability so that behavioral outcomes can be attributed and improved. It requires automating risk controls so that governance scales with system complexity. And it requires reconstituting the organization – roles, responsibilities, review cadences, systems of record – around the operating model that agentic delivery demands.

THE STRATEGIC SUMMARY

The Agentic Manifesto makes one argument with eight facets: the transition from software delivery to behavioral governance is not optional, and the organizations that make it deliberately will build advantages that are structural, compounding, and difficult to replicate. The investment implications are clear – guardrails, evaluation infrastructure, phased deployment, governance tooling, and operating model redesign. The leadership implication is equally clear: AI strategy is now governance strategy. Treat it accordingly.

The governance moat is available. It will not remain open indefinitely.